
Last time, we took a thorough look at how Android converts the Java-side onDraw() method into a native display list on the C++ side. This time we go further along the Android graphics pipeline and look at how Android draws these display lists to the screen. We are now leaving the comfortable realm of garbage-collected Java and entering the dark and scary dungeon called C++. But don’t worry, we’ll keep it simple and only show the relevant and interesting bits of code.

Drawing the display list

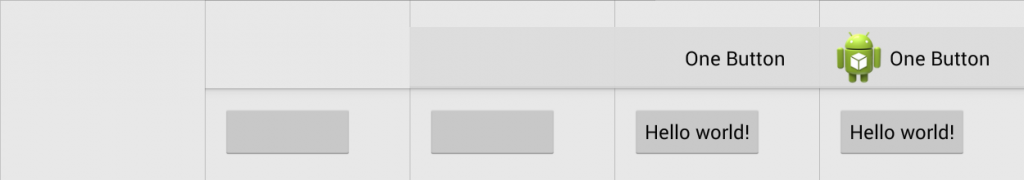

Before Android 4.3, rendering operations were executed in the same order in which the UI elements were added to the view hierarchy, and therefore to the resulting display list. This can be the worst-case scenario for GPUs, as they must switch state for every element. For example, when drawing two buttons, the GPU needs to draw the NinePatch and text for the first button, and then the same for the second button, resulting in at least 3 state changes.
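To make the cost a bit more concrete, here is a minimal sketch that counts how often the GPU state would have to change for a given sequence of operations. `OpKind` and `countStateChanges` are hypothetical names made up for this illustration and are not part of the Android source; the sketch also assumes that every operation kind needs exactly one distinct GPU state.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical operation kinds; each kind is assumed to need its own
// GPU state (shader, texture, blend mode).
enum class OpKind { Patch, Text };

// Counts how often the kind changes between consecutive operations.
std::size_t countStateChanges(const std::vector<OpKind>& ops) {
    std::size_t changes = 0;
    for (std::size_t i = 1; i < ops.size(); ++i) {
        if (ops[i] != ops[i - 1]) {
            ++changes;
        }
    }
    return changes;
}
```

For two buttons drawn in hierarchy order (patch, text, patch, text) this counts 3 changes; sorted by kind (patch, patch, text, text) it counts only 1.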

Reordering and merging of operations

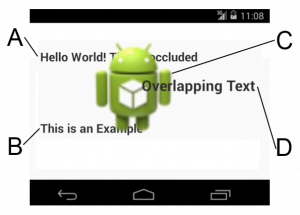

To minimize these expensive state changes, Android reorders all drawing operations based on their type and state attributes. We leave our example application with only one button for a moment and look at an arbitrary activity instead.

As seen in the image above, a simple approach of reordering and merging by type is not sufficient in most cases. Drawing all text elements and then the bitmap (or the other way around) does not result in the same final image as it would without reordering, which is clearly not acceptable.

In order to correctly render the example activity, text elements A and B have to be drawn first, followed by the bitmap C, followed by the text element D. The first two text elements could be merged into one operation, but the text element D cannot, as it would be overlapped by the bitmap.

To further reduce the drawing time needed for a view hierarchy, most operations can be merged after they have been reordered. This happens in the DeferredDisplayList, so-called because the execution of the drawing operations does not happen in order, but is deferred until all operations have been analyzed, reordered and merged.

Because every display list operation is responsible for drawing itself, an operation that supports the merging of multiple operations with the same type must be able to draw multiple, different operations in one draw call. Not every operation is capable of merging, so some can only be reordered.

The OpenGLRenderer is an implementation of the Skia 2D drawing API, but instead of using the CPU it does all the drawing hardware-accelerated with OpenGL. On the way through the pipeline, this is the first native-only class implemented in C++. The renderer is designed to be used with the GLES20Canvas and was introduced with Android 3.0. It is only used in conjunction with display lists.

To merge multiple operations into one draw call, each operation is added to the deferred display list by calling addDrawOp(DrawOp). By calling DrawOp.onDefer(...), the drawing operation is asked to supply its batchId, which indicates the type of operation it can be merged with, and its mergeId, which identifies the operations it can actually be merged with.

Possible batchIds include kOpBatch_Patch for a 9-Patch and kOpBatch_Text for a normal text element. These are defined in a simple enum. The mergeId is determined by each DrawOp itself and is used to decide whether two operations of the same DrawOp type can be merged. For a 9-Patch, the mergeId is a pointer to the asset atlas (or bitmap); for a text element, it is the paint color. Multiple drawables from the same asset atlas texture can potentially be merged into one batch, resulting in a greatly reduced rendering time.

All information about an operation is collected into a simple struct:

```cpp
struct DeferInfo {
    // Type of operation (TextView, Button, etc.)
    int batchId;

    // State of operation (Text size, font, color, etc.)
    mergeid_t mergeId;

    // Indicates if operation is mergeable
    bool mergeable;
};
```
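As a rough illustration of how the two ids could work together, the following sketch derives a mergeId from a bitmap pointer for a 9-Patch and from the paint color for text, as described above. All names (`MergeId`, `BatchId`, `deferPatch`, `deferText`, `mayMerge`) are hypothetical stand-ins chosen for this example, and the real renderer checks additional state before it merges two operations.

```cpp
#include <cstdint>

// Stand-in for the renderer's mergeid_t (assumption for this sketch).
using MergeId = std::uintptr_t;

// Abridged, hypothetical batch types.
enum BatchId { kBatchNone = 0, kBatchPatch, kBatchText };

struct DeferInfo {
    int batchId;
    MergeId mergeId;
    bool mergeable;
};

struct Bitmap {};  // placeholder for an atlas texture or bitmap

// A 9-Patch uses the address of its atlas (or bitmap) as mergeId.
DeferInfo deferPatch(const Bitmap* atlasOrBitmap) {
    return {kBatchPatch, reinterpret_cast<MergeId>(atlasOrBitmap), true};
}

// A text element uses its paint color as mergeId.
DeferInfo deferText(std::uint32_t paintColor) {
    return {kBatchText, static_cast<MergeId>(paintColor), true};
}

// Two operations are merge candidates only if both ids match.
bool mayMerge(const DeferInfo& a, const DeferInfo& b) {
    return a.mergeable && b.mergeable &&
           a.batchId == b.batchId && a.mergeId == b.mergeId;
}
```

With this, two patches from the same atlas are merge candidates, while two patches from different bitmaps, or a patch and a text element, are not.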

Once the batchId and mergeId of an operation are determined, a non-mergeable operation is added to the last batch with the same batchId; if no such batch exists yet, a new one is created. The more likely case is that the operation is mergeable. To keep track of all recently merged batches, one hashmap per batchId is used, called mergingBatches in the simplified algorithm. Using one hashmap per batchId avoids having to resolve mergeId collisions between different operation types.

```
vector<DrawBatch> batches;
Hashmap<mergeid_t, DrawBatch> mergingBatches[/* one per batchId */];

void DeferredDisplayList::addDrawOp(DrawOp op):
    DeferInfo info;
    /* DrawOp fills DeferInfo with its mergeId and batchId */
    op.onDefer(info);

    if(/* op is not mergeable */):
        /* Add Op to last added Batch with same batchId,
           if first op then create a new Batch */
        return;

    DrawBatch batch = NULL;
    int newBatchIndex = batches.size();
    if(batches.isEmpty() == false):
        batch = mergingBatches[info.batchId].get(info.mergeId);
        if(batch != NULL && /* Op can merge with batch */):
            batch.add(op);
            mergingBatches[info.batchId].put(info.mergeId, batch);
            return;

        /* Op can not merge with batch due to different states,
           flags or bounds */
        for(overBatch in batches.reverse()):
            if (overBatch == batch):
                /* No intersection as we found our own batch */
                break;

            if(overBatch.batchId == info.batchId):
                /* Save position of similar batches to insert
                   after (reordering) */
                newBatchIndex = iterationIndex;

            if(overBatch.intersects(localBounds)):
                /* We can not merge due to intersection */
                batch = NULL;
                break;

    if(batch == NULL):
        /* Create new Batch and add to mergingBatches */
        batch = new DrawBatch(...);
        mergingBatches[info.batchId].put(info.mergeId, batch);
        batches.insertAt(newBatchIndex, batch);

    batch.add(op);
```

If the current operation can be merged with another operation of the same mergeId and batchId, it is added to the existing batch and the next operation can be processed. If it cannot be merged due to different states, drawing flags or bounding boxes, the algorithm needs to insert a new merging batch. For this to happen, the position inside the list of all batches (batches) needs to be found. In the best case, it finds a batch that shares the same state with the current drawing operation. It is also essential that the operation does not intersect with any other batch while a suitable spot is being searched for. Therefore, the list of all batches is iterated over in reverse order to find a good position and to check for intersections with other elements. In case of an intersection, the operation cannot be merged; a new DrawBatch is created and inserted into the mergingBatches hashmap. The new batch is added to batches at the position found earlier. In any case, the operation is added to the current batch, which can be a new or an existing one.
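The steps above can be sketched as a small, self-contained program. This is a simplified illustration under several assumptions, not the actual DeferredDisplayList: it handles only mergeable operations, it treats "can merge" as "same batchId and mergeId with nothing overlapping drawn in between", and all type names (`Rect`, `Op`, `Batch`, `DeferredList`, `BatchKind`) are made up for this example.

```cpp
#include <cstddef>
#include <map>
#include <memory>
#include <utility>
#include <vector>

struct Rect {
    float left, top, right, bottom;
    bool intersects(const Rect& o) const {
        return left < o.right && o.left < right &&
               top < o.bottom && o.top < bottom;
    }
};

enum BatchKind { kBitmap = 0, kText = 1 };  // hypothetical batch types

struct Op { int batchId; int mergeId; Rect bounds; };

struct Batch {
    int batchId = 0;
    std::vector<Op> ops;
    bool intersects(const Rect& r) const {
        for (const Op& op : ops) {
            if (op.bounds.intersects(r)) return true;
        }
        return false;
    }
};

struct DeferredList {
    std::vector<std::unique_ptr<Batch>> batches;          // draw order
    std::map<int, std::map<int, Batch*>> mergingBatches;  // batchId -> mergeId -> batch

    void add(const Op& op) {
        std::map<int, Batch*>& merging = mergingBatches[op.batchId];
        Batch* target = nullptr;
        auto found = merging.find(op.mergeId);
        if (found != merging.end()) target = found->second;

        // Walk backwards from the most recent batch towards the target.
        std::size_t insertAt = batches.size();
        for (std::size_t i = batches.size(); i-- > 0;) {
            Batch* over = batches[i].get();
            if (over == target) break;  // reached our own batch, nothing overlaps
            if (over->batchId == op.batchId) insertAt = i + 1;  // insert after similar batch
            if (over->intersects(op.bounds)) {  // drawn on top of something newer
                target = nullptr;
                break;
            }
        }

        if (target == nullptr) {
            auto fresh = std::make_unique<Batch>();
            fresh->batchId = op.batchId;
            target = fresh.get();
            merging[op.mergeId] = target;
            batches.insert(batches.begin() + static_cast<std::ptrdiff_t>(insertAt),
                           std::move(fresh));
        }
        target->ops.push_back(op);
    }
};
```

Feeding it the earlier example (text A, text B, bitmap C, then text D on top of C) yields three batches: A and B merged, C, and D on its own. If D did not overlap C, it would be merged into the first text batch instead.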

The actual implementation is more complex than the simplified version presented here. A few optimizations are worth mentioning: the algorithm tries to avoid overdraw by removing occluded drawing operations, and it also tries to reorder non-mergeable operations to avoid GPU state changes.

Actually drawing the (deferred) display list

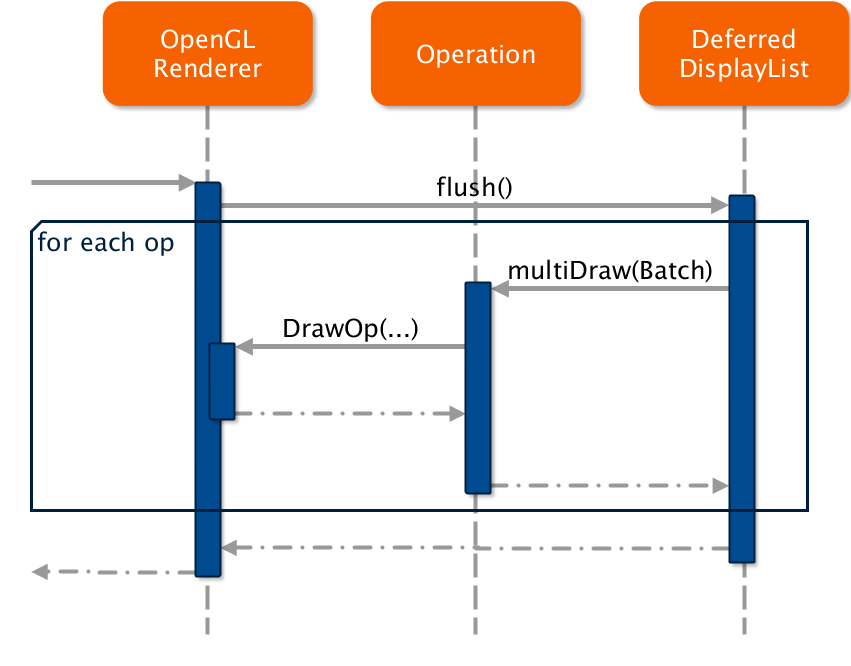

After reordering and merging, the new deferred display list can finally be drawn to the screen.

Inside the OpenGLRenderer::drawDisplayList(…) method, the deferred display list is created and filled with operations from the normal display list. The deferred display list is then asked to draw itself (flush(…)).

```cpp
status_t OpenGLRenderer::drawDisplayList(
        DisplayList* displayList, Rect& dirty,
        int32_t replayFlags) {

    // All the usual checks and setup operations
    // (quickReject, setupDraw, etc.)
    // will be performed by the display list itself
    if (displayList && displayList->isRenderable()) {
        DeferredDisplayList deferredList(*(mSnapshot->clipRect));
        DeferStateStruct deferStruct(
                deferredList, *this, replayFlags);
        displayList->defer(deferStruct, 0);

        return deferredList.flush(*this, dirty);
    }

    return DrawGlInfo::kStatusDone;
}
```

When a batch of merged operations is drawn, the method multiDraw(…) is called on the first operation of that batch, with all operations of the batch as an argument. The called operation is responsible for drawing all supplied operations at once and will also call the OpenGLRenderer to actually execute the operation itself.

Display List Operations

Each drawing operation to be executed on a canvas has a corresponding display list operation. All display list operations must implement the replay() method, which executes the wrapped drawing operation. These drawing operations call the OpenGLRenderer to render themselves. The reference to the renderer needs to be supplied when creating an operation.

onDefer() must also be implemented and must return the operation’s batchId and mergeId. Non-mergeable operations set the batchId to kOpBatch_None. Mergeable operations must implement the multiDraw() method, which is used when a whole batch of merged operations needs to be rendered at once.
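The difference between replaying operations one by one and drawing a merged batch can be sketched with a toy interface. `FakeRenderer`, this `DrawOp` base class and `PatchOp` are simplified, hypothetical stand-ins for illustration; the real classes take more parameters and return a status code.

```cpp
#include <cstddef>
#include <vector>

// Counts draw calls instead of talking to OpenGL.
struct FakeRenderer {
    int drawCalls = 0;
    void draw(std::size_t /*quadCount*/) { ++drawCalls; }
};

class DrawOp {
public:
    virtual ~DrawOp() = default;

    // Executes this single operation.
    virtual void replay(FakeRenderer& renderer) = 0;

    // Draws a whole batch; the fallback simply replays every operation.
    virtual void multiDraw(FakeRenderer& renderer,
                           const std::vector<DrawOp*>& ops) {
        for (DrawOp* op : ops) op->replay(renderer);
    }
};

class PatchOp : public DrawOp {
public:
    void replay(FakeRenderer& renderer) override { renderer.draw(1); }

    // A mergeable operation submits all patches of the batch at once.
    void multiDraw(FakeRenderer& renderer,
                   const std::vector<DrawOp*>& ops) override {
        renderer.draw(ops.size());
    }
};
```

Replaying three `PatchOp` instances individually results in three draw calls on the fake renderer, while calling `multiDraw` on the first one with all three as argument results in a single call.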

For example, the operation to draw a 9-Patch (called DrawPatchOp) contains the following multiDraw(…) implementation:

```cpp
virtual status_t multiDraw(OpenGLRenderer& renderer, Rect& dirty,
        const Vector<OpStatePair>& ops, const Rect& bounds) {

    // Merge all 9-Patch vertices and texture coordinates
    // into one big vector
    Vector<TextureVertex> vertices;
    for (unsigned int i = 0; i < ops.size(); i++) {
        DrawPatchOp* patchOp = (DrawPatchOp*) ops[i].op;
        const Patch* opMesh = patchOp->getMesh(renderer);
        TextureVertex* opVertices = opMesh->vertices;

        for (uint32_t j = 0; j < opMesh->verticesCount;
                j++, opVertices++) {
            vertices.add(TextureVertex(opVertices->position[0],
                    opVertices->position[1],
                    opVertices->texture[0],
                    opVertices->texture[1]));
        }
    }

    // Tell the renderer to draw multiple textured polygons
    return renderer.drawPatches(mBitmap, getAtlasEntry(),
            &vertices[0], getPaint(renderer));
}
```

The batchId of a 9-Patch is always kOpBatch_Patch; the mergeId is a pointer to the bitmap used. Therefore, all patches that use the same bitmap can be merged together. This is even more important with the use of the asset atlas, as all heavily used 9-Patches from the Android framework can now potentially be merged together because they reside on the same texture.

Texture Atlas

The Android start-up process zygote always keeps a number of assets preloaded which are shared with all processes. These assets contain frequently used 9-Patches and images for the standard Android framework widgets. Before Android 4.4, however, every process kept a separate copy of these assets in GPU memory. Starting with Android 4.4 KitKat, these frequently used assets are packed into a texture atlas, uploaded to the GPU and shared between all processes. Only then is merging of 9-Patches and other drawables from the standard framework possible.

The image above shows an asset atlas texture generated on a Nexus 7 (2013) running Android 4.4, which contains all frequently used framework assets. If you look closely, the 9-Patches do not feature the typical borders which indicate the layout and padding areas. The original asset files are still used to parse these areas on system start, but they are not used for rendering any longer.

When a system boots for the first time after an Android update (or for the first time ever), the AssetAtlasService regenerates the texture atlas. This atlas is then used for all subsequent reboots, until a new Android update is applied.

To generate the atlas, the service brute-forces through all possible atlas configurations and looks for the best one. The best configuration is the one that fits the maximum number of assets onto the minimum texture size. It is written to /data/system/framework_atlas.config and contains the chosen algorithm, the dimensions, whether rotations are allowed and whether padding has been added. This configuration is then used on subsequent reboots to regenerate the texture atlas. An RGBA8888 graphic buffer is allocated as the asset atlas texture, and all assets are rendered onto it via a temporary Skia bitmap. This asset atlas texture is valid for the lifetime of the AssetAtlasService and is only deallocated when the system itself shuts down.
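Choosing among the brute-forced candidates could look roughly like the sketch below. The struct `AtlasResult` and the functions `betterThan` and `pickBest` are hypothetical, and the tie-breaking order (asset count first, texture area second) is an assumption made for this illustration rather than something taken from the service's source.

```cpp
#include <algorithm>
#include <vector>

// Outcome of one packing attempt with a given configuration (hypothetical).
struct AtlasResult {
    int width;
    int height;
    int packedAssets;
};

// Assumption: more packed assets wins, then the smaller texture area.
bool betterThan(const AtlasResult& a, const AtlasResult& b) {
    if (a.packedAssets != b.packedAssets) {
        return a.packedAssets > b.packedAssets;
    }
    return a.width * a.height < b.width * b.height;
}

// Returns the best candidate; expects a non-empty list.
AtlasResult pickBest(const std::vector<AtlasResult>& results) {
    return *std::min_element(results.begin(), results.end(), betterThan);
}
```

Given candidates of 2048x2048 with 300 assets, 1024x2048 with 300 assets and 1024x1024 with 250 assets, this picks the 1024x2048 one.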

To actually pack all assets into the atlas, the service starts with an empty texture. After placing the first asset, the remaining space is divided into two rectangular cells. Depending on the algorithm used, this split can either be horizontal or vertical. The next asset texture is added in the first cell that is large enough to fit. This now occupied cell will be split again and the next asset is processed. The AssetAtlasService is using multiple threads to speed up the time it takes to iterate through all combinations.
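The cell-splitting idea can be sketched in a few lines. This is a simplified illustration, not the AssetAtlasService code: `AtlasPacker`, `Cell` and `Placement` are hypothetical names, and rotation, padding and the multi-threaded search are left out.

```cpp
#include <cstddef>
#include <vector>

struct Cell { int x, y, width, height; };
struct Placement { int x, y; };

class AtlasPacker {
public:
    AtlasPacker(int width, int height, bool splitHorizontally)
        : splitHorizontally_(splitHorizontally) {
        freeCells_.push_back({0, 0, width, height});
    }

    // Places a w x h asset into the first free cell that is large enough.
    bool pack(int w, int h, Placement& out) {
        for (std::size_t i = 0; i < freeCells_.size(); ++i) {
            const Cell cell = freeCells_[i];
            if (w > cell.width || h > cell.height) continue;

            freeCells_.erase(freeCells_.begin() + static_cast<std::ptrdiff_t>(i));
            if (splitHorizontally_) {
                // Right of the asset, then the full-width strip below it.
                addCell({cell.x + w, cell.y, cell.width - w, h});
                addCell({cell.x, cell.y + h, cell.width, cell.height - h});
            } else {
                // Below the asset, then the full-height strip right of it.
                addCell({cell.x, cell.y + h, w, cell.height - h});
                addCell({cell.x + w, cell.y, cell.width - w, cell.height});
            }
            out = {cell.x, cell.y};
            return true;
        }
        return false;  // no free cell can hold the asset
    }

private:
    void addCell(const Cell& c) {
        if (c.width > 0 && c.height > 0) freeCells_.push_back(c);
    }

    bool splitHorizontally_;
    std::vector<Cell> freeCells_;
};
```

On a 100x100 texture with horizontal splits, a 60x40 asset lands at (0, 0), a following 40x40 asset at (60, 0) and a 100x60 asset at (0, 40), after which the texture is full.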

When a new app is started, its HardwareRenderer queries the AssetAtlasService for this texture and every time the renderer needs to draw a bitmap or 9-Patch it will check the atlas first.

Font caching and rendering

In order to merge text views, a similar approach is used and a font cache is generated. But in contrast to the texture atlas, this font atlas is unique for each app and font type. The color of the font can be applied in a shader and is therefore not considered in the atlas.

If you take a quick glance at the font atlas, you will instantly see that only a few characters are present. When taking a closer look, you will see only the used characters! If you think about how many languages Android supports, and how many characters are supported, only caching the used ones makes perfectly good sense. And because the action bar and the button are using the same font, all characters from both text views can be merged onto one texture.
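The "cache only what is used" idea can be illustrated with a tiny sketch. `GlyphCache` is a hypothetical class for this example; the real font cache stores rasterized glyph bitmaps in a texture and works on glyph ids rather than on plain `char` values.

```cpp
#include <cstddef>
#include <map>
#include <string>

class GlyphCache {
public:
    // Returns the slot of a glyph, allocating a new slot on first use.
    std::size_t slotFor(char glyph) {
        auto it = slots_.find(glyph);
        if (it != slots_.end()) return it->second;
        const std::size_t slot = slots_.size();
        slots_.emplace(glyph, slot);
        return slot;
    }

    // Makes sure every character of the text has a slot.
    void precache(const std::string& text) {
        for (char c : text) slotFor(c);
    }

    std::size_t size() const { return slots_.size(); }

private:
    std::map<char, std::size_t> slots_;
};
```

Caching "Hello" occupies four slots (H, e, l, o); caching "Help" afterwards adds only one more slot for the p.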

To draw the font to the screen, the renderer needs to generate a geometry to which the texture gets bound. The geometry is generated on the CPU and then drawn via the OpenGL command glDrawElements(). If the device supports OpenGL ES 3.0, the FontRenderer will update and upload the font cache texture asynchronously at the start of the frame, while the GPU is mostly idle, which saves precious milliseconds per frame. The cache texture is implemented as an OpenGL Pixel Buffer Object, which makes an asynchronous upload possible.
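Generating such a geometry on the CPU could look like the following sketch, which builds one textured quad (four vertices, six indices) per glyph. The types `TextureVertex`, `GlyphQuad` and `Mesh` and the function `buildMesh` are assumptions for this illustration and do not mirror the FontRenderer's actual data structures.

```cpp
#include <cstddef>
#include <cstdint>
#include <initializer_list>
#include <vector>

// Position and texture coordinate, 16 bytes per vertex.
struct TextureVertex { float x, y, u, v; };

// Screen rectangle of a glyph and its rectangle inside the font texture.
struct GlyphQuad { float x, y, w, h, u0, v0, u1, v1; };

struct Mesh {
    std::vector<TextureVertex> vertices;
    std::vector<std::uint16_t> indices;  // drawn as GL_UNSIGNED_SHORT
};

// Builds two triangles per glyph so a single glDrawElements() call
// can draw the whole text.
Mesh buildMesh(const std::vector<GlyphQuad>& quads) {
    Mesh mesh;
    for (const GlyphQuad& q : quads) {
        const std::size_t base = mesh.vertices.size();
        mesh.vertices.push_back({q.x, q.y, q.u0, q.v0});
        mesh.vertices.push_back({q.x + q.w, q.y, q.u1, q.v0});
        mesh.vertices.push_back({q.x, q.y + q.h, q.u0, q.v1});
        mesh.vertices.push_back({q.x + q.w, q.y + q.h, q.u1, q.v1});
        for (std::size_t offset : {0u, 1u, 2u, 2u, 1u, 3u}) {
            mesh.indices.push_back(static_cast<std::uint16_t>(base + offset));
        }
    }
    return mesh;
}
```

For 20 glyphs this produces 80 vertices and 120 indices, which would be consistent with the glDrawElements call with count = 120 and maxIndex = 80 in the full OpenGL log.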

OpenGL

At the start of this mini-series I promised you some raw OpenGL drawing commands. So without further ado, here is the (not quite complete) OpenGL drawing log for the button of our simple one-button activity:

```
glUniformMatrix4fv(location = 2, count = 1, transpose = false, value = [1.0, 0.0, 0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 0.0, 1.0])
glBindBuffer(target = GL_ARRAY_BUFFER, buffer = 0)
glGenBuffers(n = 1, buffers = [3])
glBindBuffer(target = GL_ELEMENT_ARRAY_BUFFER, buffer = 3)
glBufferData(target = GL_ELEMENT_ARRAY_BUFFER, size = 24576, data = [ 24576 bytes ], usage = GL_STATIC_DRAW)
glVertexAttribPointer(indx = 0, size = 2, type = GL_FLOAT, normalized = false, stride = 16, ptr = 0xbefdcf18)
glVertexAttribPointer(indx = 1, size = 2, type = GL_FLOAT, normalized = false, stride = 16, ptr = 0xbefdcf20)
glVertexAttribPointerData(indx = 0, size = 2, type = GL_FLOAT, normalized = false, stride = 16, ptr = 0x??, minIndex = 0, maxIndex = 48)
glVertexAttribPointerData(indx = 1, size = 2, type = GL_FLOAT, normalized = false, stride = 16, ptr = 0x??, minIndex = 0, maxIndex = 48)
glDrawElements(mode = GL_MAP_INVALIDATE_RANGE_BIT, count = 72, type = GL_UNSIGNED_SHORT, indices = 0x0)
glBindBuffer(target = GL_ARRAY_BUFFER, buffer = 2)
glBufferSubData(target = GL_ARRAY_BUFFER, offset = 768, size = 576, data = [ 576 bytes ])
glDisable(cap = GL_BLEND)
glUniformMatrix4fv(location = 2, count = 1, transpose = false, value = [1.0, 0.0, 0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 33.0, 0.0, 1.0])
glVertexAttribPointer(indx = 0, size = 2, type = GL_FLOAT, normalized = false, stride = 16, ptr = 0x300)
glVertexAttribPointer(indx = 1, size = 2, type = GL_FLOAT, normalized = false, stride = 16, ptr = 0x308)
glDrawElements(mode = GL_MAP_INVALIDATE_RANGE_BIT, count = 54, type = GL_UNSIGNED_SHORT, indices = 0x0)
eglSwapBuffers()
```

The complete OpenGL draw call log can be seen at the end of this blog post.

Conclusion

We have seen how Android converts its view hierarchy to a series of render commands inside a display list, reorders and merges these commands and finally how these commands are executed.

Returning to our example activity with one button, the entire view can be rendered in just 5 steps:

- The layout draws the background image, which is a linear gradient.

- Both the ActionBar and Button background 9-Patches are drawn. These two operations were merged into one batch, as both 9-Patches are located on the same texture.

- A linear gradient is drawn for the ActionBar.

- Text for the Button and the ActionBar is drawn simultaneously. As these two views use the same font, the font renderer can use the same font texture and therefore merge the two operations.

- The application’s icon is drawn.

And there you have it, we traced all the way from the view hierarchy to the final OpenGL commands, which concludes this mini-series.

Download

The full Bachelor’s Thesis on which this article is based is available for download.

Full Listings

Display List

```
Start display list (0x5ea4f008, PhoneWindow.DecorView, render=1)
  Save 3
  ClipRect 0.00, 0.00, 720.00, 1184.00
  SetupShader, shader 0x5ea5af08
  Draw Rect 0.00 0.00 720.00 1184.00
  ResetShader
  Draw Display List 0x5ea64d30, flags 0x244053
    Start display list (0x5ea64d30, ActionBarOverlayLayout, render=1)
      Save 3
      ClipRect 0.00, 0.00, 720.00, 1184.00
      Draw Display List 0x5ea5ad78, flags 0x24053
        Start display list (0x5ea5ad78, FrameLayout, render=1)
          Save 3
          Translate (left, top) 0, 146
          ClipRect 0.00, 0.00, 720.00, 1038.00
          Draw Display List 0x5ea59bf8, flags 0x224053
            Start display list (0x5ea59bf8, RelativeLayout, render=1)
              Save 3
              ClipRect 0.00, 0.00, 720.00, 1038.00
              Save flags 3
              ClipRect 32.00 32.00 688.00 1006.00
              Draw Display List 0x5cfee368, flags 0x224073
                Start display list (0x5cfee368, Button, render=1)
                  Save 3
                  Translate (left, top) 32, 32
                  ClipRect 0.00, 0.00, 243.00, 96.00
                  Draw patch 0.00 0.00 243.00 96.00
                  Save flags 3
                  ClipRect 24.00 0.00 219.00 80.00
                  Translate by 24.000000 23.000000
                  Draw Text of count 12, bytes 24
                  Restore to count 1
                Done (0x5cfee368, Button)
              Restore to count 1
            Done (0x5ea59bf8, RelativeLayout)
        Done (0x5ea5ad78, FrameLayout)
      Draw Display List 0x5ea64ac8, flags 0x24053
        Start display list (0x5ea64ac8, ActionBarContainer, render=1)
          Save 3
          Translate (left, top) 0, 50
          ClipRect 0.00, 0.00, 720.00, 96.00
          Draw patch 0.00 0.00 720.00 96.00
          Draw Display List 0x5ea64910, flags 0x224053
            Start display list (0x5ea64910, ActionBarView, render=1)
              Save 3
              ClipRect 0.00, 0.00, 720.00, 96.00
              Draw Display List 0x5ea63790, flags 0x224053
                Start display list (0x5ea63790, LinearLayout, render=1)
                  Save 3
                  Translate (left, top) 17, 0
                  ClipRect 0.00, 0.00, 265.00, 96.00
                  Draw Display List 0x5ea5fe80, flags 0x224053
                    Start display list (0x5ea5fe80, ActionBarView.HomeView, render=1)
                      Save 3
                      ClipRect 0.00, 0.00, 80.00, 96.00
                      Draw Display List 0x5ea5ed00, flags 0x224053
                        Start display list (0x5ea5ed00, ImageView, render=1)
                          Save 3
                          Translate (left, top) 8, 16
                          ClipRect 0.00, 0.00, 64.00, 64.00
                          Save flags 3
                          ConcatMatrix [0.67 0.00 0.00] [0.00 0.67 0.00] [0.00 0.00 1.00]
                          Draw bitmap 0x5d33ae70 at 0.000000 0.000000
                          Restore to count 1
                        Done (0x5ea5ed00, ImageView)
                    Done (0x5ea5fe80, ActionBarView.HomeView)
                  Draw Display List 0x5ea63618, flags 0x224053
                    Start display list (0x5ea63618, LinearLayout, render=1)
                      Save 3
                      Translate (left, top) 80, 23
                      ClipRect 0.00, 0.00, 185.00, 49.00
                      Save flags 3
                      ClipRect 0.00 0.00 169.00 49.00
                      Draw Display List 0x5ea634a0, flags 0x224073
                        Start display list (0x5ea634a0, TextView, render=1)
                          Save 3
                          ClipRect 0.00, 0.00, 169.00, 49.00
                          Save flags 3
                          ClipRect 0.00 0.00 169.00 49.00
                          Draw Text of count 9, bytes 18
                          Restore to count 1
                        Done (0x5ea634a0, TextView)
                      Restore to count 1
                    Done (0x5ea63618, LinearLayout)
                Done (0x5ea63790, LinearLayout)
            Done (0x5ea64910, ActionBarView)
        Done (0x5ea64ac8, ActionBarContainer)
      Draw patch 0.00 146.00 720.00 178.00
    Done (0x5ea64d30, ActionBarOverlayLayout)
Done (0x5ea4f008, PhoneWindow.DecorView)
```

OpenGL

```
eglCreateContext(version = 1, context = 0)
eglMakeCurrent(context = 0)
glGetIntegerv(pname = GL_MAX_TEXTURE_SIZE, params = [2048])
glGetIntegerv(pname = GL_MAX_TEXTURE_SIZE, params = [2048])
glGetString(name = GL_VERSION) = OpenGL ES 2.0 14.01003
glGetIntegerv(pname = GL_MAX_TEXTURE_SIZE, params = [2048])
glGenBuffers(n = 1, buffers = [1])
glBindBuffer(target = GL_ARRAY_BUFFER, buffer = 1)
glBufferData(target = GL_ARRAY_BUFFER, size = 64, data = [64 bytes], usage = GL_STATIC_DRAW)
glDisable(cap = GL_SCISSOR_TEST)
glActiveTexture(texture = GL_TEXTURE0)
glGenBuffers(n = 1, buffers = [2])
glBindBuffer(target = GL_ARRAY_BUFFER, buffer = 2)
glBufferData(target = GL_ARRAY_BUFFER, size = 131072, data = 0x0, usage = GL_DYNAMIC_DRAW)
glGetIntegerv(pname = GL_MAX_COMBINED_TEXTURE_IMAGE_UNITS, params = [16])
glGetIntegerv(pname = GL_MAX_TEXTURE_SIZE, params = [2048])
glGenTextures(n = 1, textures = [1])
glBindTexture(target = GL_TEXTURE_2D, texture = 1)
glEGLImageTargetTexture2DOES(target = GL_TEXTURE_2D, image = 2138532008)
glGetError(void) = (GLenum) GL_NO_ERROR
glDisable(cap = GL_DITHER)
glClearColor(red = 0,000000, green = 0,000000, blue = 0,000000, alpha = 0,000000)
glEnableVertexAttribArray(index = 0)
glDisable(cap = GL_BLEND)
glGenTextures(n = 1, textures = [2])
glBindTexture(target = GL_TEXTURE_2D, texture = 2)
glPixelStorei(pname = GL_UNPACK_ALIGNMENT, param = 1)
glTexImage2D(target = GL_TEXTURE_2D, level = 0, internalformat = GL_ALPHA, width = 1024, height = 512, border = 0, format = GL_ALPHA, type = GL_UNSIGNED_BYTE, pixels = [])
glTexParameteri(target = GL_TEXTURE_2D, pname = GL_TEXTURE_MIN_FILTER, param = 9728)
glTexParameteri(target = GL_TEXTURE_2D, pname = GL_TEXTURE_MAG_FILTER, param = 9728)
glTexParameteri(target = GL_TEXTURE_2D, pname = GL_TEXTURE_WRAP_S, param = 33071)
glTexParameteri(target = GL_TEXTURE_2D, pname = GL_TEXTURE_WRAP_T, param = 33071)
glViewport(x = 0, y = 0, width = 800, height = 1205)
glPixelStorei(pname = GL_UNPACK_ALIGNMENT, param = 1)
glTexSubImage2D(target = GL_TEXTURE_2D, level = 0, xoffset = 0, yoffset = 0, width = 1024, height = 80, format = GL_ALPHA, type = GL_UNSIGNED_BYTE, pixels = 0x697b7008)
glInsertEventMarkerEXT(length = 0, marker = Flush)
glBindBuffer(target = GL_ARRAY_BUFFER, buffer = 0)
glBindTexture(target = GL_TEXTURE_2D, texture = 1)
glTexParameteri(target = GL_TEXTURE_2D, pname = GL_TEXTURE_WRAP_S, param = 33071)
glTexParameteri(target = GL_TEXTURE_2D, pname = GL_TEXTURE_WRAP_T, param = 33071)
glTexParameteri(target = GL_TEXTURE_2D, pname = GL_TEXTURE_MIN_FILTER, param = 9729)
glTexParameteri(target = GL_TEXTURE_2D, pname = GL_TEXTURE_MAG_FILTER, param = 9729)
glCreateShader(type = GL_VERTEX_SHADER) = (GLuint) 1
glShaderSource(shader = 1, count = 1, string = attribute vec4 position; attribute vec2 texCoords; uniform mat4 projection; uniform mat4 transform; varying vec2 outTexCoords; void main(void) { outTexCoords = texCoords; gl_Position = projection * transform * position; } , length = [0])
glCompileShader(shader = 1)
glGetShaderiv(shader = 1, pname = GL_COMPILE_STATUS, params = [1])
glCreateShader(type = GL_FRAGMENT_SHADER) = (GLuint) 2
glShaderSource(shader = 2, count = 1, string = precision mediump float; varying vec2 outTexCoords; uniform sampler2D baseSampler; void main(void) { gl_FragColor = texture2D(baseSampler, outTexCoords); } , length = [0])
glCompileShader(shader = 2)
glGetShaderiv(shader = 2, pname = GL_COMPILE_STATUS, params = [1])
glCreateProgram(void) = (GLuint) 3
glAttachShader(program = 3, shader = 1)
glAttachShader(program = 3, shader = 2)
glBindAttribLocation(program = 3, index = 0, name = position)
glBindAttribLocation(program = 3, index = 1, name = texCoords)
glGetProgramiv(program = 3, pname = GL_ACTIVE_ATTRIBUTES, params = [2])
glGetProgramiv(program = 3, pname = GL_ACTIVE_ATTRIBUTE_MAX_LENGTH, params = [10])
glGetActiveAttrib(program = 3, index = 0, bufsize = 10, length = [0], size = [1], type = [GL_FLOAT_VEC4], name = position)
glGetActiveAttrib(program = 3, index = 1, bufsize = 10, length = [0], size = [1], type = [GL_FLOAT_VEC2], name = texCoords)
glGetProgramiv(program = 3, pname = GL_ACTIVE_UNIFORMS, params = [3])
glGetProgramiv(program = 3, pname = GL_ACTIVE_UNIFORM_MAX_LENGTH, params = [12])
glGetActiveUniform(program = 3, index = 0, bufsize = 12, length = [0], size = [1], type = [GL_FLOAT_MAT4], name = projection)
glGetActiveUniform(program = 3, index = 1, bufsize = 12, length = [0], size = [1], type = [GL_FLOAT_MAT4], name = transform)
glGetActiveUniform(program = 3, index = 2, bufsize = 12, length = [0], size = [1], type = [GL_SAMPLER_2D], name = baseSampler)
glLinkProgram(program = 3)
glGetProgramiv(program = 3, pname = GL_LINK_STATUS, params = [1])
glGetUniformLocation(program = 3, name = transform) = (GLint) 2
glGetUniformLocation(program = 3, name = projection) = (GLint) 1
glUseProgram(program = 3)
glGetUniformLocation(program = 3, name = baseSampler) = (GLint) 0
glUniform1i(location = 0, x = 0)
glUniformMatrix4fv(location = 1, count = 1, transpose = false, value = [0.0025, 0.0, 0.0, 0.0, 0.0, -0.001659751, 0.0, 0.0, 0.0, 0.0, -1.0, 0.0, -1.0, 1.0, -0.0, 1.0])
glUniformMatrix4fv(location = 2, count = 1, transpose = false, value = [800.0, 0.0, 0.0, 0.0, 0.0, 1205.0, 0.0, 0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 0.0, 1.0])
glEnableVertexAttribArray(index = 1)
glVertexAttribPointer(indx = 0, size = 2, type = GL_FLOAT, normalized = false, stride = 16, ptr = 0x681e7af4)
glVertexAttribPointer(indx = 1, size = 2, type = GL_FLOAT, normalized = false, stride = 16, ptr = 0x681e7afc)
glVertexAttribPointerData(indx = 0, size = 2, type = GL_FLOAT, normalized = false, stride = 16, ptr = 0x??, minIndex = 0, maxIndex = 4)
glVertexAttribPointerData(indx = 1, size = 2, type = GL_FLOAT, normalized = false, stride = 16, ptr = 0x??, minIndex = 0, maxIndex = 4)
glDrawArrays(mode = GL_TRIANGLE_STRIP, first = 0, count = 4)
glBindBuffer(target = GL_ARRAY_BUFFER, buffer = 2)
glBufferSubData(target = GL_ARRAY_BUFFER, offset = 0, size = 576, data = [ 576 bytes ])
glBufferSubData(target = GL_ARRAY_BUFFER, offset = 576, size = 192, data = [ 192 bytes ])
glEnable(cap = GL_BLEND)
glBlendFunc(sfactor = GL_SYNC_FLUSH_COMMANDS_BIT, dfactor = GL_ONE_MINUS_SRC_ALPHA)
glUniformMatrix4fv(location = 2, count = 1, transpose = false, value = [1.0, 0.0, 0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 0.0, 1.0])
glBindBuffer(target = GL_ARRAY_BUFFER, buffer = 0)
glGenBuffers(n = 1, buffers = [3])
glBindBuffer(target = GL_ELEMENT_ARRAY_BUFFER, buffer = 3)
glBufferData(target = GL_ELEMENT_ARRAY_BUFFER, size = 24576, data = [ 24576 bytes ], usage = GL_STATIC_DRAW)
glVertexAttribPointer(indx = 0, size = 2, type = GL_FLOAT, normalized = false, stride = 16, ptr = 0xbefdcf18)
glVertexAttribPointer(indx = 1, size = 2, type = GL_FLOAT, normalized = false, stride = 16, ptr = 0xbefdcf20)
glVertexAttribPointerData(indx = 0, size = 2, type = GL_FLOAT, normalized = false, stride = 16, ptr = 0x??, minIndex = 0, maxIndex = 48)
glVertexAttribPointerData(indx = 1, size = 2, type = GL_FLOAT, normalized = false, stride = 16, ptr = 0x??, minIndex = 0, maxIndex = 48)
glDrawElements(mode = GL_MAP_INVALIDATE_RANGE_BIT, count = 72, type = GL_UNSIGNED_SHORT, indices = 0x0)
glBindBuffer(target = GL_ARRAY_BUFFER, buffer = 2)
glBufferSubData(target = GL_ARRAY_BUFFER, offset = 768, size = 576, data = [ 576 bytes ])
glDisable(cap = GL_BLEND)
glUniformMatrix4fv(location = 2, count = 1, transpose = false, value = [1.0, 0.0, 0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 33.0, 0.0, 1.0])
glVertexAttribPointer(indx = 0, size = 2, type = GL_FLOAT, normalized = false, stride = 16, ptr = 0x300)
glVertexAttribPointer(indx = 1, size = 2, type = GL_FLOAT, normalized = false, stride = 16, ptr = 0x308)
glDrawElements(mode = GL_MAP_INVALIDATE_RANGE_BIT, count = 54, type = GL_UNSIGNED_SHORT, indices = 0x0)
glEnable(cap = GL_BLEND)
glUniformMatrix4fv(location = 2, count = 1, transpose = false, value = [1.0, 0.0, 0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 0.0, 1.0])
glBindBuffer(target = GL_ARRAY_BUFFER, buffer = 0)
glBindTexture(target = GL_TEXTURE_2D, texture = 2)
glVertexAttribPointer(indx = 0, size = 2, type = GL_FLOAT, normalized = false, stride = 16, ptr = 0x696bd008)
glVertexAttribPointer(indx = 1, size = 2, type = GL_FLOAT, normalized = false, stride = 16, ptr = 0x696bd010)
glVertexAttribPointerData(indx = 0, size = 2, type = GL_FLOAT, normalized = false, stride = 16, ptr = 0x??, minIndex = 0, maxIndex = 80)
glVertexAttribPointerData(indx = 1, size = 2, type = GL_FLOAT, normalized = false, stride = 16, ptr = 0x??, minIndex = 0, maxIndex = 80)
glDrawElements(mode = GL_MAP_INVALIDATE_RANGE_BIT, count = 120, type = GL_UNSIGNED_SHORT, indices = 0x0)
glGenTextures(n = 1, textures = [3])
glBindTexture(target = GL_TEXTURE_2D, texture = 3)
glPixelStorei(pname = GL_UNPACK_ALIGNMENT, param = 4)
glTexImage2D(target = GL_TEXTURE_2D, level = 0, internalformat = GL_RGBA, width = 64, height = 64, border = 0, format = GL_RGBA, type = GL_UNSIGNED_BYTE, pixels = 0x420cd930)
glTexParameteri(target = GL_TEXTURE_2D, pname = GL_TEXTURE_MIN_FILTER, param = 9728)
glTexParameteri(target = GL_TEXTURE_2D, pname = GL_TEXTURE_MAG_FILTER, param = 9728)
glTexParameteri(target = GL_TEXTURE_2D, pname = GL_TEXTURE_WRAP_S, param = 33071)
glTexParameteri(target = GL_TEXTURE_2D, pname = GL_TEXTURE_WRAP_T, param = 33071)
glUniformMatrix4fv(location = 2, count = 1, transpose = false, value = [64.0, 0.0, 0.0, 0.0, 0.0, 64.0, 0.0, 0.0, 0.0, 0.0, 1.0, 0.0, 16.0, 38.0, 0.0, 1.0])
glBindBuffer(target = GL_ARRAY_BUFFER, buffer = 1)
glVertexAttribPointer(indx = 0, size = 2, type = GL_FLOAT, normalized = false, stride = 16, ptr = 0x0)
glVertexAttribPointer(indx = 1, size = 2, type = GL_FLOAT, normalized = false, stride = 16, ptr = 0x8)
glBindBuffer(target = GL_ELEMENT_ARRAY_BUFFER, buffer = 0)
glDrawArrays(mode = GL_TRIANGLE_STRIP, first = 0, count = 4)
glGetError(void) = (GLenum) GL_NO_ERROR
eglSwapBuffers()
```
