This article gives an overview of the current state of the Jamstack web architecture in 2022 and an explanation of selected technologies and techniques related to the concept.

The Jamstack is a web architecture or architectural concept designed to make web applications faster and easier to develop and scale. As explained by Julia Wayrauther in the State of the Web from 2019, the core principles of the Jamstack are pre-rendering of web pages at build time and decoupling of system elements, such as Frontend and Backend. Content Delivery Networks (CDN) are used to host the Frontend separately at the edge, while APIs such as Headless Content Management Systems (CMS) are preferred as the Backend for a better Separation of Concerns.

More than two years have passed since our last article about the Jamstack. Since then, the growth of the Jamstack’s popularity has slowed, and it is no longer rising as steeply as the earlier numbers suggested.

There is a good reason for this (or at least I suspect there is), which I will discuss at the end. Nevertheless, the Jamstack ecosystem has progressed in many regards, and several exciting innovations have been introduced since 2019, which we will look at below.

First, we will explain a selection of the latest build time optimization strategies that bring fresh content live as fast as possible, such as Next.js’s Incremental Static Regeneration (ISR) and Netlify’s Distributed Persistent Rendering (DPR). Second, we will look at the newest advances in edge computing, using stateless and stateful edge functions as examples. As promised, the article concludes with an outlook on the future of the Jamstack.

Build time optimization – static, but not static 🏎

One of the most pressing challenges of the Jamstack has always been long build times. They make for a poor user experience and can also burden maintainers, because content updates take a long time to take effect in the live app. For example, if a product is deleted in the CMS, its associated page remains accessible in the web app until the next build and deployment have finished. Build time optimization techniques seek to reduce build time so that content updates feel more seamless and provide a more dynamic experience to the user. We will look at two of them in more detail below.

Incremental Static Regeneration

Next.js’s ISR is a build time optimization strategy introduced with version 9.5 of the framework. It allows developers to incrementally update the content of existing pages behind the scenes as traffic comes in. With ISR, developers can choose whether a page should be rendered at build time or on demand, and can update content on a per-page basis without rebuilding the entire site.
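As a minimal sketch of what this looks like in practice (assuming the Pages Router data-fetching API of Next.js), a product detail page can pre-render only a few paths via `getStaticPaths`, leave all others to be rendered on their first request with `fallback: "blocking"`, and set its revalidation timer by returning `revalidate` (in seconds) from `getStaticProps`. The file name, the product IDs, and the `fetchProduct` helper are hypothetical, and the parameter types are simplified stand-ins for Next.js’s own `GetStaticPaths`/`GetStaticProps` types so that the snippet runs on its own.

```typescript
// pages/products/[id].tsx (hypothetical file name); only the data-fetching part is shown.

// Simplified stand-ins for Next.js's GetStaticPaths/GetStaticProps types.
type Params = { id: string };
type Product = { id: string; name: string };

// Hypothetical CMS access; a real app would query its headless CMS here.
async function fetchProduct(id: string): Promise<Product> {
  return { id, name: `Product ${id}` };
}

// Pre-render only the most important products at build time.
export async function getStaticPaths() {
  return {
    paths: [{ params: { id: "1" } }, { params: { id: "2" } }],
    // All other product pages are rendered on their first request and then cached.
    fallback: "blocking" as const,
  };
}

export async function getStaticProps({ params }: { params: Params }) {
  const product = await fetchProduct(params.id);
  return {
    props: { product },
    // Revalidation timer: once 60 seconds have passed, the next request
    // triggers a background regeneration of this page.
    revalidate: 60,
  };
}
```

Each page file carries its own `revalidate` value, which is what makes the per-page update behavior described above possible.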

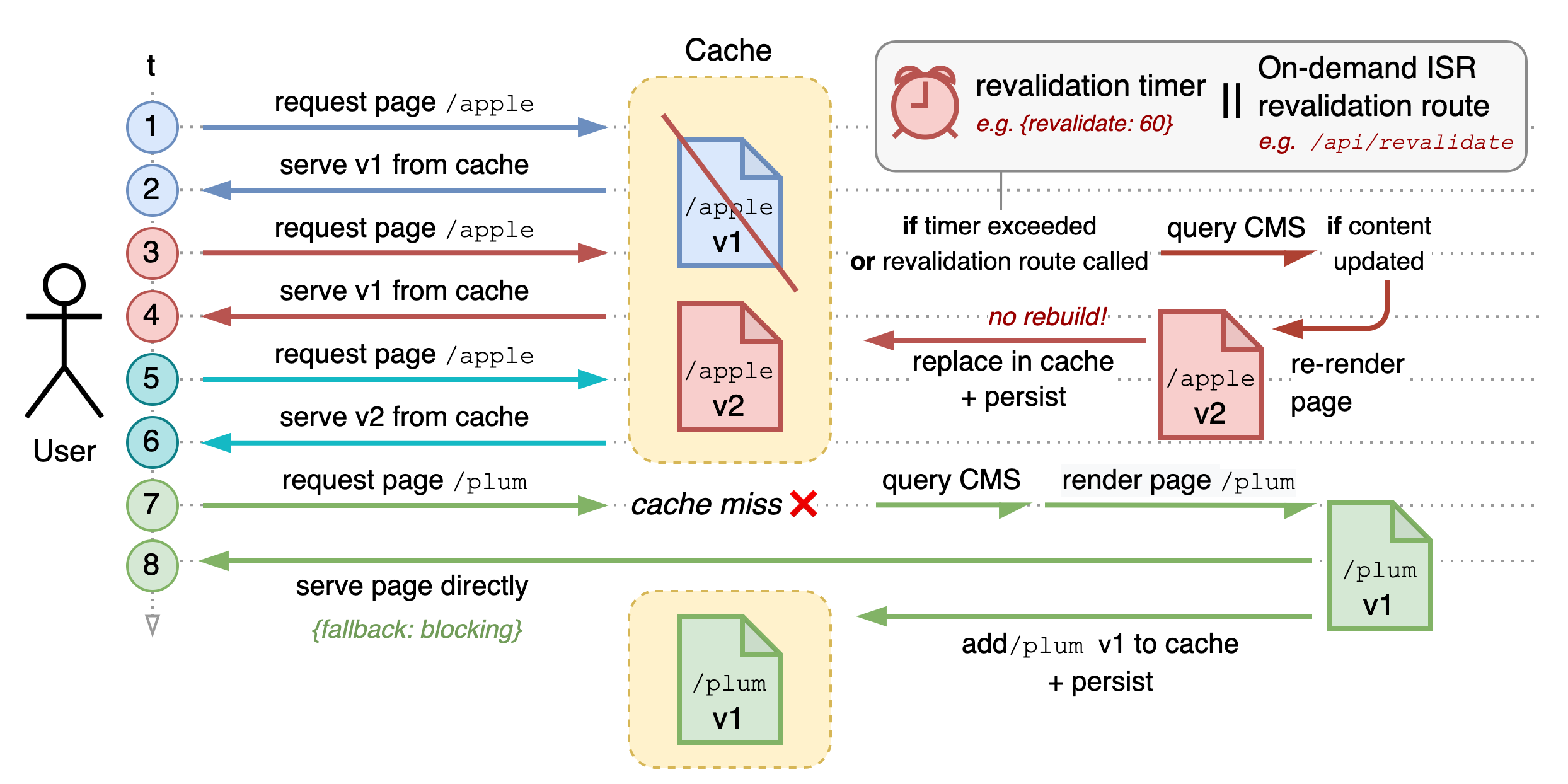

Roughly speaking, ISR works as follows: Developers define a revalidation timer per page. After this timer expires, the first incoming request for a page triggers a check to see if the page has changed since the last rendering (see 1 in the diagram above).

If it has, a new rendering of this single page is triggered, and the old version of the page is replaced with the fresh one both in the cache and in persistent storage. Meanwhile, the user’s request is answered immediately with the previous version (→ 4). Pages that were excluded from pre-rendering are rendered on demand and then added to the set of pre-rendered pages to be reused in future requests and builds (→ 7 & 8).
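To make this request flow more tangible, the following TypeScript sketch models it with an in-memory cache. It is an illustration under simplifying assumptions, not Next.js’s actual implementation: the hypothetical `IsrCache` class always re-renders after the timer expires instead of checking whether the content changed, runs the regeneration as a microtask instead of on a server, and uses a single cache instead of a cache plus persistent storage.

```typescript
// Simplified in-memory model of ISR's stale-while-revalidate flow.
// This is an illustration, not how Next.js implements ISR internally.

type RenderFn = (path: string) => string;

interface CachedPage {
  html: string;
  renderedAt: number; // time of rendering in milliseconds
}

class IsrCache {
  private readonly pages = new Map<string, CachedPage>();
  private readonly regenerating = new Set<string>();
  private readonly render: RenderFn;
  private readonly revalidateMs: number;

  constructor(render: RenderFn, revalidateMs: number) {
    this.render = render;
    this.revalidateMs = revalidateMs;
  }

  // Render a page at build time.
  prerender(path: string, now: number): void {
    this.pages.set(path, { html: this.render(path), renderedAt: now });
  }

  // Answer a request for `path` at time `now`.
  get(path: string, now: number): string {
    const cached = this.pages.get(path);
    if (cached === undefined) {
      // Excluded from pre-rendering: render on demand and keep it for later requests.
      const html = this.render(path);
      this.pages.set(path, { html, renderedAt: now });
      return html;
    }
    const expired = now - cached.renderedAt >= this.revalidateMs;
    if (expired && !this.regenerating.has(path)) {
      // Timer expired: regenerate in the background (here: as a microtask) ...
      this.regenerating.add(path);
      queueMicrotask(() => {
        this.pages.set(path, { html: this.render(path), renderedAt: now });
        this.regenerating.delete(path);
      });
    }
    // ... while this request is answered with the existing, possibly stale page.
    return cached.html;
  }
}
```

The key design point is visible in `get`: an expired page triggers a regeneration but is still returned as-is, so the request that triggers the update pays no rendering cost, and only subsequent requests see the fresh version.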

This means that ISR allows incremental content updates without rebuilds and redeployments. In addition, every request is served static-fast, although the served content may be stale. The option to exclude certain pages from pre-rendering and serve them on demand provides great flexibility.

Unfortunately, ISR is a Next.js-only feature, and the platforms supporting it are limited: Vercel, for example, is the only platform that fully supports it without constraints. Furthermore, changing pages and builds after the initial deployment violates their immutability, which can hinder traceability and cause problems if you want to roll back to a previous state.

One of the biggest criticisms of ISR has been that pages with mutual dependencies, such as a Product Overview and a Product Details page, could get out of sync when they use different revalidation timers. However, a few weeks ago, a new feature called on-demand ISR was released, which allows forcing page updates by calling a so-called revalidation route. For example, when updating the Product Overview, you can now force an update of the Product Details to keep them in sync.
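A revalidation route for the Product Overview/Product Details example could look roughly like the sketch below, assuming a Next.js API route and a CMS webhook that calls it with a shared secret and a product ID. The route name, the `secret` and `id` query parameters, and the token are hypothetical. `res.revalidate(path)` is the method Next.js exposes on API responses for on-demand ISR (in Next.js 12.1 it was still named `res.unstable_revalidate`); the request and response interfaces are minimal stand-ins for `NextApiRequest` and `NextApiResponse`.

```typescript
// pages/api/revalidate.ts (hypothetical route name).
// Minimal stand-ins for the parts of NextApiRequest/NextApiResponse used here.
interface ApiRequest {
  query: Record<string, string | string[] | undefined>;
}
interface ApiResponse {
  status(code: number): ApiResponse;
  json(body: unknown): void;
  revalidate(urlPath: string): Promise<void>;
}

// Hypothetical secret shared with the CMS webhook; in practice read it from an environment variable.
const REVALIDATE_TOKEN = "change-me";

export default async function handler(req: ApiRequest, res: ApiResponse) {
  if (req.query.secret !== REVALIDATE_TOKEN) {
    return res.status(401).json({ message: "Invalid token" });
  }
  const id = req.query.id;
  if (typeof id !== "string") {
    return res.status(400).json({ message: "Missing product id" });
  }
  try {
    // Regenerate both dependent pages together so they cannot drift apart.
    await res.revalidate("/products");
    await res.revalidate(`/products/${id}`);
    return res.json({ revalidated: true });
  } catch {
    // If regeneration fails, the previously generated pages keep being served.
    return res.status(500).json({ message: "Error revalidating" });
  }
}
```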

In summary, ISR is a mixture of pre-rendering and just-in-time server-side rendering (SSR) that allows updating pre-rendered assets incrementally in the background. Even though it requires a running server to regenerate pages, requests can still be served static-fast if that server crashes, unlike with SSR. Nevertheless, this hybrid approach moves away from the Jamstack principle that a web app should be deployed completely statically. Moreover, poorly implemented ISR might cause consistency problems, although on-demand ISR mitigates them. Also, keep in mind that ISR only works for content updates, not for code updates; those still require a fresh build and deployment.

ISR is a powerful technique that can be useful for small to mid-sized web apps whose update frequency exceeds that of blogs but does not reach that of social media, such as eCommerce shops. Distributed Persistent Rendering (DPR) is another build time optimization strategy, one that stays more faithful to traditional static site generation.

Distributed Persistent Rendering

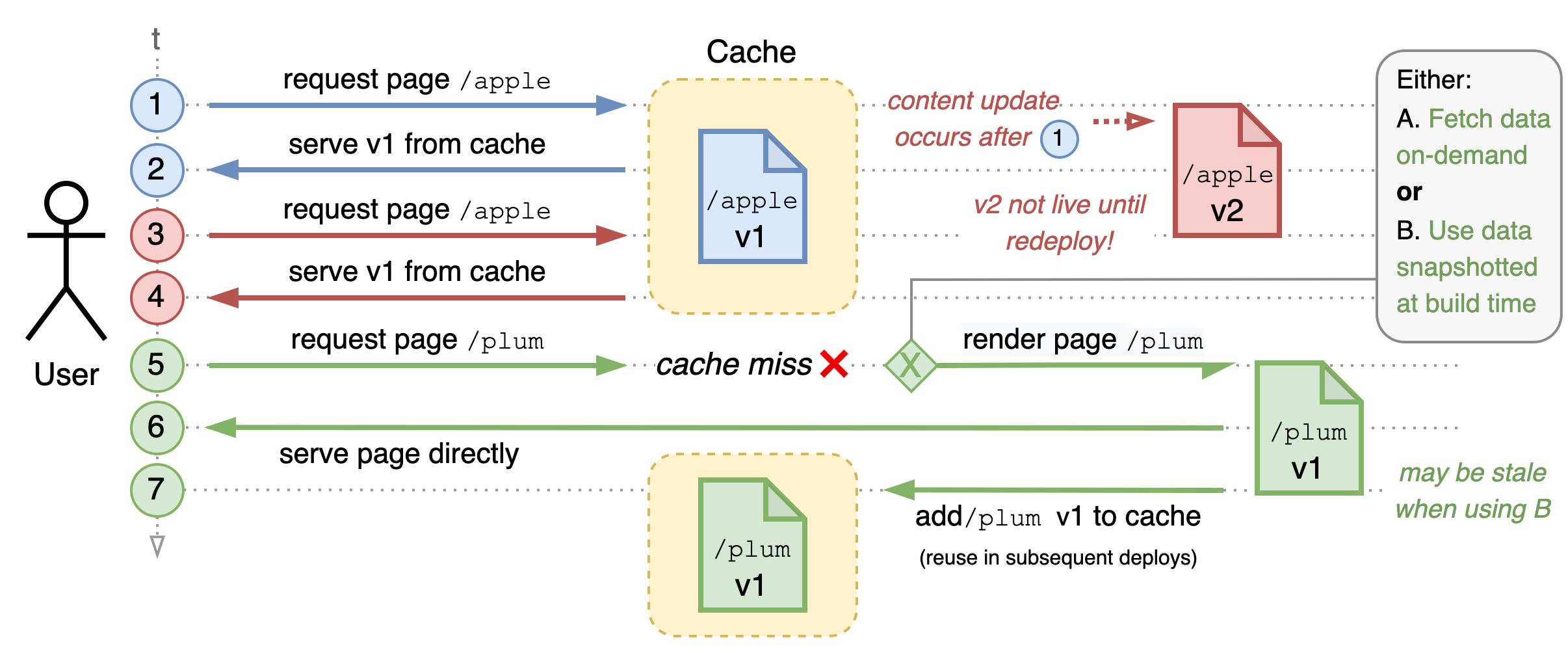

As with ISR, DPR allows excluding selected pages from the initial build to reduce build time. In contrast to ISR, pages that have already been rendered cannot receive content updates, which ensures the immutability of pages and builds. With DPR, deferred pages, i.e., pages that were excluded from the initial rendering, are generated on demand and cached, but not persisted right away.
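The following sketch models these DPR semantics, assuming a cache whose keys are namespaced by a deploy ID. The `DprDeploy` class and its parameters are hypothetical and only illustrate the idea; they are not Netlify’s implementation. Deferred pages are rendered once on their first request and then served unchanged, and a new deploy starts with a fresh namespace, so the old deploy’s pages remain intact for rollbacks.

```typescript
// Conceptual model of DPR: deferred pages are rendered on first request and then
// served unchanged for the rest of that deploy. Not Netlify's implementation.

type RenderPage = (path: string) => string;

class DprDeploy {
  private readonly deployId: string;
  private readonly render: RenderPage;
  // Shared across deploys; keys are namespaced by deploy ID.
  private readonly cache: Map<string, string>;

  constructor(deployId: string, render: RenderPage, cache: Map<string, string>, critical: string[]) {
    this.deployId = deployId;
    this.render = render;
    this.cache = cache;
    // Critical pages are rendered during the build, as in classic static site generation.
    for (const path of critical) this.cache.set(this.key(path), render(path));
  }

  private key(path: string): string {
    return `${this.deployId}:${path}`;
  }

  get(path: string): string {
    const key = this.key(path);
    const cached = this.cache.get(key);
    if (cached !== undefined) return cached; // never re-rendered within this deploy
    const html = this.render(path); // deferred page: rendered once, on demand
    this.cache.set(key, html);
    return html;
  }
}
```

Unlike the ISR model, there is no timer: within one deploy, a page is rendered at most once, and fresh content only appears with the next deploy.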

DPR is a theoretical concept developed by Netlify (see their RFC on DPR for more information). Gatsby presented an implementation of the idea in October 2021 called Deferred Static Generation (DSG). Apparently, overly complicated terms are not uncommon in the Jamstack ecosystem.

Gatsby’s DSG takes a snapshot of the deferred pages’ content at build time and renders the pages from that snapshot later. This guarantees the immutability of builds and pages.
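In Gatsby 4, deferring a page is a matter of passing `defer: true` to `createPage` in `gatsby-node`. The sketch below defers long-tail blog posts, assuming hypothetical post data with a `monthlyViews` field and a hypothetical view threshold; a real site would query this data through Gatsby’s GraphQL layer, and the interfaces are simplified stand-ins for Gatsby’s own types.

```typescript
// gatsby-node.ts (sketch). Post data and the view threshold are hypothetical.
import * as path from "node:path";

// Minimal stand-ins for the parts of Gatsby's Node API types used here.
interface PageInput {
  path: string;
  component: string;
  context: Record<string, unknown>;
  defer?: boolean;
}
interface Actions {
  createPage(page: PageInput): void;
}

interface Post {
  slug: string;
  monthlyViews: number;
}

// Hypothetical data; a real site would query its CMS through Gatsby's `graphql` helper.
const posts: Post[] = [
  { slug: "jamstack-in-2022", monthlyViews: 5400 },
  { slug: "release-notes-2017", monthlyViews: 12 },
];

// Hypothetical threshold below which a post counts as long-tail content.
const DEFER_BELOW_VIEWS = 100;

export const createPages = ({ actions }: { actions: Actions }): void => {
  const component = path.resolve("./src/templates/post.tsx");
  for (const post of posts) {
    actions.createPage({
      path: `/blog/${post.slug}`,
      component,
      context: { slug: post.slug },
      // Long-tail pages are skipped at build time and generated on their first request.
      defer: post.monthlyViews < DEFER_BELOW_VIEWS,
    });
  }
};
```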

While ISR is a good choice for dynamic use cases, DPR/DSG is ideally suited for more static use cases such as blogs or magazines because it allows deferring long-tail pages that are rarely accessed, reducing the build time by a substantial amount.

Modern build time optimization strategies such as ISR and DPR/DSG can shorten build times significantly and thus open up many new (dynamic) use cases for the Jamstack architecture while preserving the inherent benefits of static site generation such as high performance, scalability, reliability, and security – especially when combined with build caching and techniques like incremental builds. However, they often come at the cost of added complexity and might introduce vendor lock-in.

As mentioned before, Jamstack apps are statically deployed and enhanced by client-side JavaScript, e.g., via serverless APIs. Below, we look at some of the latest innovations in edge computing that allow backend functionality to be offloaded to the edge.

Edge functions – serverless on fire 🔥

Both serverless computing and edge networks are inherent parts of the Jamstack. Thus, so-called edge functions achieve great synergy when combined with Jamstack apps. Like cloud functions such as AWS Lambda, edge functions execute serverless code; however, they do not run in a centralized manner but are distributed across the globe over many points of presence (PoPs), as displayed in the figure below.

There are two types of edge functions, stateless and stateful, which we will cover in the following sections.

Stateless Edge Functions

As the name suggests, stateless edge functions have no state, i.e., they have no “memory” and do not persist any data. They allow running code and modifying requests before the server or CDN processes them. Since these scripts are executed in a decentralized manner at the edge, they are very easy to scale. In addition, edge functions have little to no process overhead and, unlike cloud functions, are not affected by cold starts.

Edge functions have been around for a few years but only recently began to pick up momentum, when Vercel released Next.js 12 featuring Middleware in beta. Middleware functions are an implementation of the aforementioned edge functions and can be deployed as such. Under the hood, they are based on Cloudflare Workers (CFW). Platforms such as Vercel or Netlify support their API without configuration and automatically deploy them as edge functions alongside the web app. They provide access to the Service Workers API at the edge. In contrast to conventional cloud functions such as AWS Lambda, which run in heavier environments like Node.js inside a container or virtual machine, CFWs run inside V8 isolates. Isolates are lightweight contexts with their own scope and memory that group variables with the code allowed to mutate them.

A single instance of V8 can run a large number of isolates without introducing much additional process overhead.

Even though stateless edge functions offer limited configurability, being restricted to the Service Workers API plus platform-specific APIs, they are still helpful in many cases, especially since support for ECMAScript modules was added. For example, they can be used for:

- Stateless authentication, e.g., as displayed in the figure below where the token verification takes place at the edge

- Redirects and rewrites, e.g., for A/B testing, or to route a user directly to the correct locale

- Modifying headers, e.g., for feature flags

- Conditional blocking, e.g., for security (bot detection, outdated TLS versions), regional restrictions, etc.

- …
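Several of these use cases can be combined in a single stateless edge function. The sketch below uses the Cloudflare Workers module syntax with nothing but the standard `Request`, `Response`, and `Headers` Web APIs (Next.js Middleware offers similar functionality through its own `NextRequest`/`NextResponse` helpers). It blocks requests by region using the `CF-IPCountry` header that Cloudflare adds, redirects the root path to a locale, and assigns an A/B bucket that is forwarded to the origin together with a feature flag header. The cookie and header names, the locales, and the blocked country code are hypothetical, and the origin call is injected as `forward` so the logic can be exercised without a network.

```typescript
// Stateless edge function sketch (Workers module syntax, standard Web APIs only).
// Cookie/header names, locales, and the blocked country code are hypothetical.

const SUPPORTED_LOCALES = ["en", "de"];
const BLOCKED_COUNTRIES = new Set(["XX"]); // placeholder country code

type Forward = (request: Request) => Promise<Response>;

function pickLocale(acceptLanguage: string | null): string {
  // Primary tag of the first listed language, e.g. "de-DE,de;q=0.9" -> "de".
  const first = (acceptLanguage ?? "").split(",")[0].split(";")[0].trim();
  const primary = first.split("-")[0].toLowerCase();
  return SUPPORTED_LOCALES.includes(primary) ? primary : "en";
}

export async function handle(request: Request, forward: Forward): Promise<Response> {
  const url = new URL(request.url);

  // 1. Conditional blocking by region (Cloudflare adds the CF-IPCountry header).
  const country = request.headers.get("cf-ipcountry");
  if (country !== null && BLOCKED_COUNTRIES.has(country)) {
    return new Response("Not available in your region", { status: 451 });
  }

  // 2. Redirect the root path straight to the visitor's preferred locale.
  if (url.pathname === "/") {
    const locale = pickLocale(request.headers.get("accept-language"));
    return Response.redirect(`${url.origin}/${locale}/`, 307);
  }

  // 3. A/B testing: reuse the bucket from the cookie or assign one at random.
  const cookie = request.headers.get("cookie") ?? "";
  const match = /(?:^|;\s*)ab-bucket=(a|b)/.exec(cookie);
  const bucket = match ? match[1] : Math.random() < 0.5 ? "a" : "b";

  // 4. Pass the bucket and a feature flag on to the origin as request headers.
  const headers = new Headers(request.headers);
  headers.set("x-ab-bucket", bucket);
  headers.set("x-feature-new-checkout", bucket === "b" ? "on" : "off");
  const upstream = await forward(new Request(request, { headers }));

  // Copy the response so its headers are mutable, then remember a new assignment.
  const response = new Response(upstream.body, upstream);
  if (!match) response.headers.append("set-cookie", `ab-bucket=${bucket}; Path=/`);
  return response;
}

export default {
  fetch: (request: Request) => handle(request, (r) => fetch(r)),
};
```

Because the function keeps no state of its own, the A/B assignment has to travel with the client in a cookie; this is exactly the limitation that stateful edge functions address.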

Still, as stateless edge functions do not persist state, coordinated processing is not possible and individual instances cannot be explicitly addressed. However, this changes with stateful edge functions.

Stateful Edge Functions

The most advanced technology for stateful processing at the edge comes from Cloudflare: Durable Objects (DOB). Clients can target specific DOB instances, and each instance has its own volatile and persistent storage that is shared across multiple clients and connections. DOBs can be used to partition the state of an app so that it corresponds to physical units of storage. For example, a shopping cart is a logical unit of state that can be physically implemented as an encapsulated unit using DOBs. This aligns logical and physical state, and many logistical tasks, especially scaling, can then be handled by the infrastructure provider.
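A minimal sketch of the shopping cart example as a Durable Object class might look as follows. A Worker would route every request for a given user to the same object, e.g., via `env.CART.idFromName(userId)` and `env.CART.get(id)`, where `CART` is a hypothetical binding name. The `CartState`/`CartStorage` interfaces are simplified stand-ins for the `DurableObjectState` type from `@cloudflare/workers-types`, covering only the `storage.get` and `storage.put` calls used here, and the `env` constructor argument is omitted.

```typescript
// Shopping cart as a Durable Object (sketch). The interfaces below are simplified
// stand-ins for DurableObjectState from @cloudflare/workers-types.
interface CartStorage {
  get<T>(key: string): Promise<T | undefined>;
  put<T>(key: string, value: T): Promise<void>;
}
interface CartState {
  storage: CartStorage;
}

type Items = Record<string, number>; // product ID -> quantity

export class ShoppingCart {
  private readonly state: CartState;

  constructor(state: CartState) {
    this.state = state;
  }

  // All requests for the same cart reach this one instance, so the cart's
  // state lives in a single place instead of a shared central database.
  async fetch(request: Request): Promise<Response> {
    const items = (await this.state.storage.get<Items>("items")) ?? {};
    if (request.method === "POST") {
      const { productId, quantity } = (await request.json()) as { productId: string; quantity: number };
      items[productId] = (items[productId] ?? 0) + quantity;
      await this.state.storage.put("items", items);
    }
    return new Response(JSON.stringify(items), {
      headers: { "content-type": "application/json" },
    });
  }
}
```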

In addition, DOBs support WebAssembly and allow long-lived WebSocket connections. The latter opens up many possibilities for connecting to databases and, above all, for real-time use cases, for example:

- Real-time chats, feeds, like counters, notifications, live coding, etc.

- Dynamic pricing, product placements or recommendations, etc.

- Multiplayer gaming

- …
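As an illustration of the real-time use cases, here is a sketch of a like counter that broadcasts every change to all connected clients. In a real Durable Object, each connection would come from a `WebSocketPair` whose server side is accepted with `accept()` and whose client side is returned in a `101` response; here, the hypothetical `Socket` interface stands in for that server-side WebSocket so the broadcast logic can run on its own. The count lives only in memory; persisting it with `storage.put` is omitted.

```typescript
// Real-time like counter (sketch). `Socket` is a hypothetical stand-in for the
// server side of a Workers WebSocketPair.
interface Socket {
  send(message: string): void;
}

export class LikeCounter {
  private readonly sessions = new Set<Socket>();
  private likes = 0;

  // Called once per accepted WebSocket connection.
  join(socket: Socket): void {
    this.sessions.add(socket);
    socket.send(JSON.stringify({ likes: this.likes })); // send the current state
  }

  leave(socket: Socket): void {
    this.sessions.delete(socket);
  }

  // Called when any client sends a "like" message.
  like(): void {
    this.likes += 1;
    const message = JSON.stringify({ likes: this.likes });
    for (const session of this.sessions) {
      try {
        session.send(message);
      } catch {
        // Drop connections that can no longer be written to.
        this.sessions.delete(session);
      }
    }
  }
}
```

Since all clients of one counter are connected to the same object, the broadcast is a simple loop over in-memory sessions, with no external pub/sub system involved.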

In short, using DOBs for these use cases has two advantages: performance and ease of use. DOBs achieve low latency through their lightweight runtime, cold-start prevention, and global distribution while automatically adapting to changed request volumes. Furthermore, they can be used without any infrastructure configuration. Therefore, you can focus on the business logic and do not have to touch the infrastructure, even with hundreds of parallel WebSocket connections.

Despite the innovations of recent years, edge function environments cannot (yet) match the capabilities of products like AWS Lambda. However, they are getting closer with each release, and technologies like Deno Deploy, a third-party hosted Deno environment at the edge, could close or at least narrow this gap in the near future. As with the build time optimization strategies above, there is again a risk of vendor lock-in. While there are many edge function vendors, most of them only offer stateless edge functions. If you want to take advantage of DOBs, you have to rely on Cloudflare and are therefore bound to their processing limits and pricing models.

Now that we have covered a few of the most recent Jamstack-related innovations, let’s wrap up and talk about the possible future of the architecture.

Jamstack – too capable for its own good? 🧐

Since the term Jamstack was coined, the architecture has led a rather niche existence. Initially, the Jamstack was associated only with static websites, but this has changed over time. We have learned that even very dynamic use cases are now possible on the Jamstack without deviating too much from its principles. Beyond blogs, portfolios, magazines, and similar sites, even webshops and news apps are now feasible thanks to innovations such as the ones mentioned above, and they can benefit from all the Jamstack’s advantages without being overly affected by its traditional constraints. However, there are still clear limits to the level of dynamism that can be achieved, especially regarding content update frequency. Not even the most modern techniques and technologies can keep up with hundreds of updates per second. This means that use cases such as marketplaces and social media remain out of reach.

Another significant problem is that the Jamstack has become more capable but also more complicated. Many innovations are trade-offs, e.g., between pre-rendering and on-demand rendering as in ISR. As a result, the architecture is becoming less opinionated, and the lines between apps that are referred to as Jamstack and those that do not fit the definition are increasingly blurring. Talking about the Jamstack now quickly drifts into abstract discussions that lose sight of the real goal: a straightforward architecture that promotes fast, uncomplicated development and provides a good user experience. I predict that if this trend continues, the label “Jamstack” could lose its usefulness.

However, this does not mean that its principles are void; on the contrary. There is a clear trend towards static rendering: Next.js, originally an SSR-only framework, has introduced pre-rendering as a default rendering option, and edge computing is just picking up steam. The API economy is also flourishing, and more and more business models based on specialized APIs are emerging.

2022 could be the year that determines whether the Jamstack can sustain itself or ultimately perish, depending on the direction in which the community takes the concept.