Notice:

This post is older than 5 years – the content might be outdated.

For a successful scientific research project, especially when handling vast amounts of experimental data, many people need to be able to contribute at the same time. This makes a centrally accessible working station indispensable.

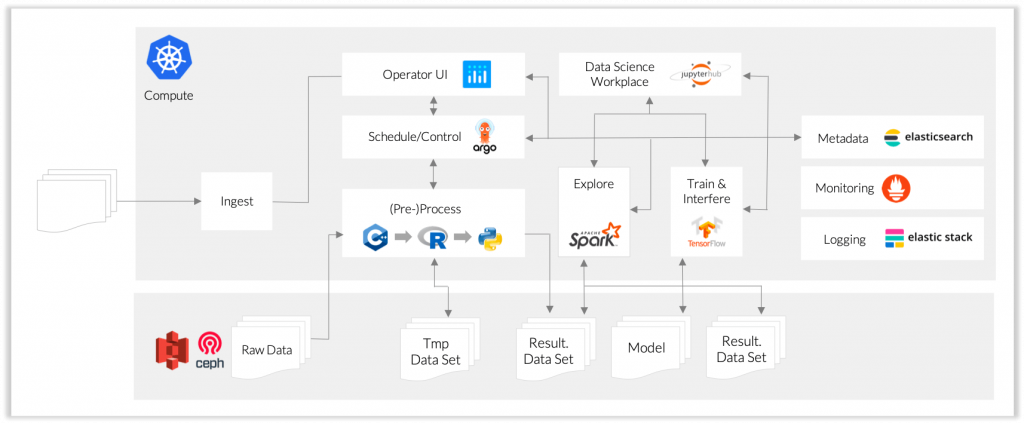

With the goal of helping research teams coordinate their data more efficiently, we developed a scalable data analysis platform. This blog post shows how we used virtualization through Kubernetes and Docker to deploy the data platform independently of the underlying hardware, with Argo as the workflow manager. At the core of our platform lies a central metadata management system implemented in Elasticsearch, which allows quick access to the metadata in every section of the workflow pipeline.

Motivation

Coming from an academic and scientific background myself, I can say from experience that a centrally accessible working platform helps (or, in some of my past projects, would have helped) with collaboration on scientific research projects. Generally, such a central platform to store, share, manipulate, and analyze data aids everyone involved and ultimately improves research outcomes.

Having a central working station allows all members of a research team to perform their individual tasks unimpeded and without delay. Adding proper metadata management will make their work more easily reproducible and comprehensible. Moreover, it allows for data to be stored and be accessible in a comprehensive manner for all newly arriving project members.

In our example project EM²Q, we need to support three typical user roles: data scientists, platform administrators, and lab technicians.

- Data scientists explore measured data, develop new algorithms or improve existing ones for analysis and evaluation on the datasets, and propose their procedures as new workflow descriptions.

- Administrators provide new workflow specifications and Docker images based on the workflow steps and applications previously composed by the data scientists.

- Lab technicians operate physical experiments and store the data along with a meaningful description of the experimental setup. They then run the aforementioned standardized workflows to analyze and evaluate the experimental data and get a first estimate of the quality of the experiments before more sophisticated analyses are run on the data.

With multiple users working on the system at the same time, scalability is a crucial aspect of the platform, especially when the whole project is about handling big data.

Here, Kubernetes saves the day! In the following, I would like to introduce how we set up a central data collaboration platform on a Kubernetes cluster and what modules we used to manage the data flow.

In our project, we dealt with imaging mass spectrometry data. These datasets can contain thousands of mass spectra that spatially resolve a biological tissue sample. A single spectrum contains hundreds of thousands of intensity values describing the concentration of molecules at different locations.

So naturally, one challenge is the efficient processing of these vast amounts of data. Another challenge that arises is comparability and reproducibility of experiments: One single measurement is already characterized by a large number of parameters describing the spectrometer setting. When performing various processing and analysis tasks on these data, additional parameterization for these data analysis workflows is introduced which we also need to keep track of.

Note, however, that the focus of this article is not on the actual data processing steps. Different tools exist for that, some of which we also used in our workflows (e.g. MALDIquant, OpenMS). Instead, this article gives an overview of the different components and their interplay in our project.

Our goal is to help research teams to keep a better overview of each parameter set used in each measurement and make comparisons of experiments within as well as between research teams easier.

What do we need to realize this?

Basic Setup

First and foremost, we need somewhere to build our whole infrastructure. For this, we employ a Kubernetes cluster along with Helm, a package manager for Kubernetes.

Then, we need to set up a distributed storage solution for the actual datasets. This is already the first example of our modular approach: many solutions exist for different tasks, and we are not limited to a particular one. In the case of distributed storage, for example, we might choose between NFS, Ceph, or HDFS, among others.

All of this serves as the foundation of our data platform. Now for the main parts: we first need a workflow manager such as Argo in order to "get stuff done with Kubernetes" (https://argoproj.github.io/). (For a comparison of workflow managers, see Evaluation von Data Science Workflow Engines für Kubernetes, a bachelor thesis by David Schmidt, available only in German.)

Argo allows us to describe workflow pipelines through declarative YAML files. Here, we can specify dependencies between the processing containers of each workflow step and also define the scaling of each step. Upon starting a workflow, all this information is automatically passed to the Kubernetes scheduler. This allows us to automate certain standard workflows (such as data conversion or measuring certain quality metrics) and also helps make these workflows reproducible.
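To illustrate what such a declarative description can look like, the following Python sketch builds a small Argo Workflow manifest as a dictionary and prints it as JSON, which is also valid YAML. It is not one of the EM²Q workflows: the step names (`convert`, `quality-metrics`), the image names, and the `dataset-id` parameter are hypothetical placeholders.

```python
import json


def build_workflow(dataset_id: str) -> dict:
    """Return a two-step Argo Workflow manifest: conversion, then quality metrics."""

    def container_template(name: str, image: str) -> dict:
        # Each workflow step runs in its own Docker container.
        return {
            "name": name,
            "inputs": {"parameters": [{"name": "dataset-id"}]},
            "container": {
                "image": image,  # hypothetical image name
                "args": ["--dataset", "{{inputs.parameters.dataset-id}}"],
            },
        }

    def dag_task(name: str, dependencies: list) -> dict:
        # A DAG task refers to a template and lists the tasks it waits for.
        task = {
            "name": name,
            "template": name,
            "arguments": {
                "parameters": [
                    {"name": "dataset-id",
                     "value": "{{workflow.parameters.dataset-id}}"}
                ]
            },
        }
        if dependencies:
            task["dependencies"] = dependencies
        return task

    return {
        "apiVersion": "argoproj.io/v1alpha1",
        "kind": "Workflow",
        "metadata": {"generateName": "quality-check-"},
        "spec": {
            "entrypoint": "main",
            "arguments": {
                "parameters": [{"name": "dataset-id", "value": dataset_id}]
            },
            "templates": [
                {
                    "name": "main",
                    "dag": {
                        "tasks": [
                            dag_task("convert", []),
                            # Runs only after the conversion step has finished.
                            dag_task("quality-metrics", ["convert"]),
                        ]
                    },
                },
                container_template("convert", "example/convert:latest"),
                container_template("quality-metrics",
                                   "example/quality-metrics:latest"),
            ],
        },
    }


manifest = build_workflow("dataset-001")
print(json.dumps(manifest, indent=2))
```

Generating the manifest from code is only one option; in practice the same structure is usually written by hand as a YAML file and kept under version control.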

Since Argo also makes use of Docker containers in every workflow step, we are not restricted to a specific programming language. Hence, we can choose from many different libraries and modules to define the algorithms in every single step of the pipeline.

To parallelize the execution of workflows, we decided to split the base dataset of mass spectrometry data into multiple chunks, with each parallel instance responsible for a predefined number of mass spectra. (We will publish another article shortly on parallelized data processing and on more advanced techniques and libraries for this purpose.)
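The splitting idea can be sketched in a few lines of Python. This is a minimal illustration under assumed names (`split_into_chunks`, `chunk_size`), not the partitioning code of the platform: it only computes which spectrum indices each parallel instance would be responsible for.

```python
def split_into_chunks(num_spectra: int, chunk_size: int) -> list:
    """Partition spectrum indices 0..num_spectra-1 into consecutive ranges.

    Every range holds at most `chunk_size` indices; the last one may be shorter.
    """
    if num_spectra < 0:
        raise ValueError("num_spectra must not be negative")
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    return [
        range(start, min(start + chunk_size, num_spectra))
        for start in range(0, num_spectra, chunk_size)
    ]


# Example: 10,000 spectra handled by instances of 2,500 spectra each.
chunks = split_into_chunks(10_000, 2_500)
for index, chunk in enumerate(chunks):
    print(f"instance {index}: spectra {chunk.start} to {chunk.stop - 1}")
```

Each range could then be passed to one workflow step as a start and stop parameter, so that the number of parallel containers follows directly from the dataset size.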

Among the different roles in our research team, specifying the workflows is the task of the platform administrator.

Metadata

(This part is strongly based on Metadatenmanagement für Data Science Workflows auf Kubernetes, a bachelor thesis by Kevin Exel, available only in German.)

To keep track of the results produced in the Argo workflows mentioned above, we also introduce a metadata system that stores information about all the data moving around in our cluster.

This helps us keep track of where the results of a pipeline are saved and with which parameterization a certain workflow was run. Also, and maybe more importantly, it tells us where to find a particular dataset when executing a workflow on it.

For our purposes, we used Elasticsearch as the underlying metadata store. Elasticsearch allows us to search for and access metadata quickly. This is a critical requirement for our platform, as we request metadata on many occasions, be it during a workflow execution or simply to show relevant data of one particular dataset to a user.
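As a rough idea of what such a metadata lookup can look like, the sketch below builds an Elasticsearch Query DSL request body in Python. The field names `study_id` and `num_spectra` are assumptions made for this example and do not come from the project's actual schema; sending the body to a cluster is left out on purpose.

```python
import json


def build_measurement_query(study_id: str, min_spectra: int, size: int = 20) -> dict:
    """Build a request body that finds measurements of one study.

    Uses a bool query with filter clauses, which match exactly and skip scoring.
    """
    return {
        "query": {
            "bool": {
                "filter": [
                    # Hypothetical keyword field identifying the study.
                    {"term": {"study_id": study_id}},
                    # Hypothetical numeric field with the number of spectra.
                    {"range": {"num_spectra": {"gte": min_spectra}}},
                ]
            }
        },
        "size": size,
    }


query_body = build_measurement_query("study-42", min_spectra=1000)
print(json.dumps(query_body, indent=2))
```

The resulting JSON can be sent to the `_search` endpoint of an index, either through an Elasticsearch client library or a plain HTTP request.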

For better structure, and to communicate with Elasticsearch more easily from multiple programming languages, we use Apache Thrift as an interface definition language.

Thrift also allows us to define a schema for structuring the metadata.

Structuring the data in a predefined format has the additional benefit that it makes creating search queries specific to the data at hand much easier.
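The platform defines its schema in Thrift. The sketch below only mirrors the idea with a plain Python dataclass, so it is a stand-in and not the Thrift definition itself. All field names (`measurement_id`, `study_id`, `num_spectra`, `storage_path`, `instrument_settings`) are hypothetical.

```python
from dataclasses import asdict, dataclass, field


@dataclass(frozen=True)
class MeasurementMetadata:
    """Fixed structure for one measurement record (illustrative fields only)."""

    measurement_id: str
    study_id: str
    num_spectra: int
    storage_path: str
    instrument_settings: dict = field(default_factory=dict)

    def __post_init__(self) -> None:
        # Reject records that cannot be valid before they reach the store.
        if not self.measurement_id:
            raise ValueError("measurement_id must not be empty")
        if self.num_spectra < 0:
            raise ValueError("num_spectra must not be negative")

    def to_document(self) -> dict:
        """Return a plain dictionary suitable for indexing as a JSON document."""
        return asdict(self)


record = MeasurementMetadata(
    measurement_id="m-001",
    study_id="study-42",
    num_spectra=1200,
    storage_path="/data/study-42/m-001",
    instrument_settings={"laser_power": 0.6},
)
document = record.to_document()
print(document)
```

Because every record has the same known fields, a query can filter on names such as `study_id` without first inspecting what a document happens to contain.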

Web Interface

Finally, to allow all users to work on a project collectively, we set up a user interface. It displays relevant data of all experiments, studies and corresponding measurements, as well as spectra and their subdivisions. It is also possible to start predefined workflows from the UI with customizable parameterization.

We built the UI with Flask for Python, and it incorporates Dash (and Plotly) elements for data visualization.

The UI provides a working place for different user roles:

- A lab technician may upload and provide the raw dataset from the spectrometer and define the proper specification of a measurement.

- A data analyst or data scientist may then use the provided datasets and perform exploratory data analysis. For this purpose, we also included the possibility of investigating the desired dataset in an integrated JupyterHub environment (see below). By doing this, the data analyst might come up with new, useful data analysis routines which can then be used to define new processing workflows.

- Then, a platform administrator can define new Argo workflows to incorporate the ideas of both the technician and the analyst and make these workflows available for execution in the UI.

To realize this, the administrator defines Docker containers with the corresponding executable containing the desired functions. An Argo YAML file can then be defined that uses these Docker containers along with the required parameterization.

Information about the workflow then needs to be included in the UI.

Afterwards, the lab technician might want to execute and evaluate a quality-measuring workflow for the data processing to get feedback on how good the results for certain specifications actually are, before moving on to the actual data analysis workflows.

JupyterHub

For the development of data analysis tasks or for creating predictive models based on the mass spectra images, we provide a JupyterHub platform. In Jupyter, a user can access a snippet of a previously selected dataset from the UI to gain a better understanding of the data at hand and how different algorithms act on the spectra.

There, a data analyst can experiment with different parameter settings, for example to find suitable smoothing parameters for preprocessing steps. Once they are happy with the results, the data analyst can save the specification to the metadata system from Jupyter. Subsequent distributed workflow executions can then load and use this specific parameterization.
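A notebook session of this kind might look like the following sketch. It uses a simple moving average as a stand-in for whatever smoothing method is actually applied, runs on a tiny made-up spectrum, and the layout of the saved parameter record is an assumption, not the format used by the metadata system.

```python
import json


def moving_average(intensities: list, window: int) -> list:
    """Smooth a spectrum with a centered moving average.

    At the edges the window is truncated to the values that exist.
    """
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd integer")
    half = window // 2
    smoothed = []
    for i in range(len(intensities)):
        lo = max(0, i - half)
        hi = min(len(intensities), i + half + 1)
        neighbourhood = intensities[lo:hi]
        smoothed.append(sum(neighbourhood) / len(neighbourhood))
    return smoothed


# Made-up intensity values; a real spectrum is far longer.
spectrum = [0.0, 1.0, 0.0, 5.0, 0.0, 1.0, 0.0]

# Compare several window sizes side by side.
for window in (1, 3, 5):
    print(window, [round(v, 2) for v in moving_average(spectrum, window)])

# Hypothetical record of the chosen setting, ready to be stored as metadata.
chosen_parameters = {"step": "smoothing", "method": "moving_average", "window": 3}
print(json.dumps(chosen_parameters))
```

A later workflow step would read such a record and call the smoothing routine with the stored window size, so the exploratory result carries over unchanged.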

Summary

In our project (EM²Q), we developed a scalable, distributed data analysis platform to analyze large biological datasets. We used virtualization through Kubernetes and Docker to deploy this platform independently of the underlying hardware. Our modular approach also makes it easier to incorporate a multitude of solutions and workflows. A central, underlying metadata system provides a bird's-eye view and administers all relevant information describing datasets and workflow results.

Lastly, a web interface has been set up to add datasets, give an overview of existing ones, start workflows, and show their results. From here, users can also jump to JupyterHub to explore the data of a selected dataset.

Cooperative project

The project is being funded by the German Federal Ministry of Economics and Technology as a cooperative project between inovex and Mannheim University of Applied Sciences. It is part of the German Central Innovation Programme for small and medium-sized enterprises.